A magazine editor forwarded me a screenshot last month. A reader had pasted an LLM answer into an email and added: “This is incredible, it summarised your investigation in seconds.” The editor’s reply to the reader was polite. But the reality is painful: “This happens all the time, and we don’t even get the clicks.”

If you were Wile E. Coyote, this would be the cue for the ACME anvil to fall on you.

“Ouch!”

That’s the new shape of the problem for publishers and journalists alike. It’s not only that journalism is being used to train LLMs or to generate answers. It’s that the receipt for that use (i.e. what was read, when, and how it shaped the answer) often doesn’t exist in a form that a publisher or freelancer can act on or benefit from.

Famous British economist Joan Robinson posited the emotional paradox decades ago: “the misery of being exploited… is nothing compared to the misery of not being exploited at all.”

It’s come back around today in AI search, and publishers are living both miseries at once: either the model doesn’t touch the open web (no credit, no traffic), or it reads widely and still doesn’t properly credit (no reliable receipts).

The measurable problem hiding inside the moral argument

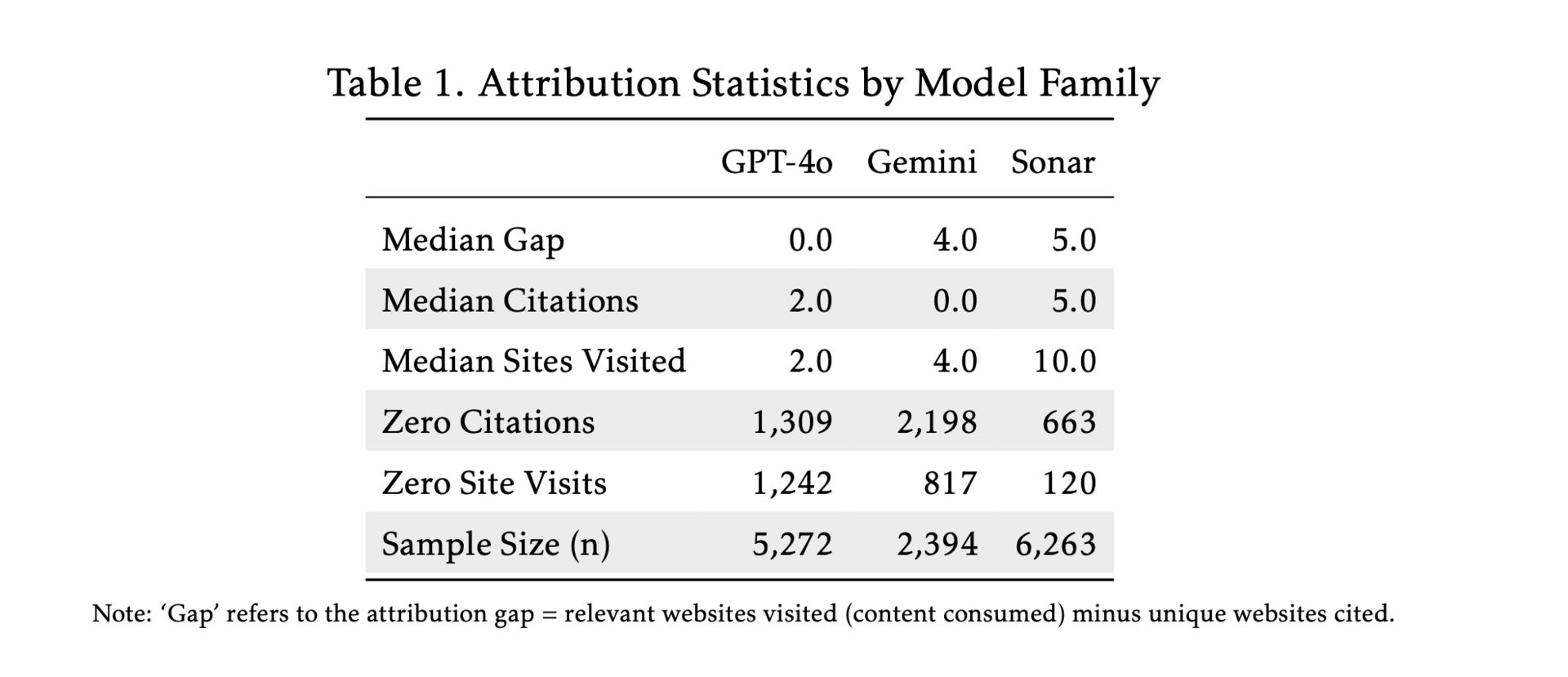

The sharpest attempt to quantify this comes from the Social Science Research Council’s AI Disclosures Project. Their working paper calls the phenomenon “ecosystem exploitation” and proposes a concrete metric: the “attribution gap,” defined as unique relevant pages visited minus unique pages cited.
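As a rough illustration of that definition, here is a minimal Python sketch. It is not the SSRC team’s code: the function names, the example URLs, and the URL normalisation rule (lower-case host, drop “www.”, ignore trailing slashes and query strings) are all my own assumptions, and it assumes the list of visited pages has already been filtered down to relevant ones.

```python
from urllib.parse import urlsplit


def normalise(url: str) -> str:
    """Reduce a URL to host + path so trivial variants count as one page.

    Assumption: query strings and fragments are ignored, which may be
    too aggressive for sites that put the article ID in the query.
    """
    parts = urlsplit(url.strip())
    host = parts.netloc.lower().removeprefix("www.")
    path = parts.path.rstrip("/")
    return f"{host}{path}"


def attribution_gap(visited: list[str], cited: list[str]) -> int:
    """Unique relevant pages visited minus unique pages cited."""
    visited_pages = {normalise(u) for u in visited}
    cited_pages = {normalise(u) for u in cited}
    return len(visited_pages) - len(cited_pages)


# Hypothetical log for a single answer: three distinct pages read
# (the first two URLs are the same page), one page credited.
visited = [
    "https://www.example.com/investigation",
    "https://example.com/investigation/",
    "https://example.org/follow-up",
    "https://example.net/explainer",
]
cited = ["https://example.com/investigation"]

print(attribution_gap(visited, cited))  # 2
```

A gap of zero means every page read was also credited; the larger the number, the more reading went unacknowledged. The calculation is only as honest as the log behind it.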

That framing matters because it forces a difficult question:

if an AI system is going to ingest the entire web to answer users, what does it owe the web in return? Traffic, credit, payment, a verifiable trail?

To test the question, the SSRC team analysed ~14,000 real-world LMArena conversation logs (March-April 2025) from search-enabled systems, focusing on Google Gemini, OpenAI GPT-4o, and Perplexity Sonar.

What they found is striking: three recurring patterns, each bad in its own way.

First problem: No Search.

Even in search-enabled contexts, the model often answers without explicitly fetching online content: this was the case for 34% of Gemini responses and 24% of GPT-4o responses in their dataset.

For publishers, “No Search” is a dead end: no visit, no credit, and no way to tell which reporting is being echoed.

Second problem: No Citation.

In the SSRC sample, Gemini provided no clickable citation source in 92% of answers.

This is the point where “it’s complicated” starts to sound like strategy. If the system can browse, it can also disclose.

Third problem: High-volume, low-credit.

Perplexity’s Sonar visited roughly 10 relevant pages per query but cited only three to four.

It reads like a researcher but credits like a gossip queen.

The SSRC paper adds a more unsettling claim:

GPT-4o’s tiny attribution gap is best explained by selective log disclosure rather than genuinely better attribution.

If that’s right, it means a model can appear “well behaved” simply by choosing which footprints to show.

It reminds me of ‘Dieselgate’, the 2015 diesel emissions scandal at Volkswagen and Audi, where software in the cars acted as a ‘defeat device’: it could tell when a car was being tested and cut emissions only for the test.

No bueno.

Training makes proof harder; search makes harm faster

Publishers tend to argue about “AI training” and “AI search” as if they’re the same fight. They’re not.

Training is where journalism is scraped from a source (or acquired from a pirate site), compressed into model weights, and baked into the model itself rather than looked up at answer time. Definitive proof becomes technically and legally messy.

Search (or retrieval) is where the product is actively reading the web right now, based on a user’s prompt, and where the commercial damage shows up quickly, because the model can answer without sending the user anywhere else.

This is why lawsuits have become both necessary and frustrating. One case can establish boundaries; it can’t easily create a scalable measurement system for an entire ecosystem.

Still, recent legal moves hint at where the weather may be heading. In Thomson Reuters v. Ross Intelligence, a U.S. judge granted summary judgment on infringement and rejected Ross’s fair use defence in the context of using Westlaw headnotes to build a competing legal research product.

And the U.S. Copyright Office’s May 2025 training report makes clear that some uses of copyrighted works in AI training may not be defensible as fair use, especially commercial uses that substitute for the original market.

But even if you win in court, you still face the operational problem:

how do you prove your work was used today, at scale, across systems you don’t control?

That’s the attribution crisis in business terms.

I had the pleasure of meeting one of the contributors to the SSRC paper, Isobel Moure, at the FIPP Congress in Madrid last October. She frames it simply:

“How can you track usage, and send a bill?”

The traffic layer is already shifting

Meanwhile, the distribution layer is moving under publishers’ feet.

Pew Research Center analysed the browsing behaviour of 900 U.S. adults in March 2025 and found that when users encountered a Google AI summary, they clicked a traditional search result in 8% of visits, versus 15% when no AI summary appeared.

That’s the funnel shrinking in real time. Those clicks are not coming back.
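To put those two Pew percentages in relative terms, here is a back-of-envelope sketch in Python. The function name is mine, and the calculation simply compares the two reported rates; it assumes they are directly comparable, which an observational study can’t fully guarantee.

```python
def relative_drop(baseline: float, observed: float) -> float:
    """Fractional fall from a baseline rate to an observed rate."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - observed) / baseline


# Click rates reported by Pew: 15% without an AI summary, 8% with one.
drop = relative_drop(baseline=0.15, observed=0.08)
print(f"{drop:.0%}")  # 47%
```

On those figures, the click rate with an AI summary is a little under half lower than without one.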

Then there’s the infrastructure cost that rarely makes it into editorials: bots. Imperva’s 2023 Bad Bot Report put bad bots at 32.1% of traffic in the U.S. and noted multiple countries where bad-bot levels exceeded the global average.

Thales’ write-up of Imperva’s 2024 findings reported that bots made up almost 50% of internet traffic, with bad bots around 32% in 2023.

The “AI” version of this is accelerating. Fastly cited TollBit reporting an 87% increase in AI bot traffic in Q1 2025.

Even if you treat that as one vendor’s measurement, the direction is clear: fewer humans, more machines, higher costs, thinner margins.

So the crisis goes beyond the moral appeal (“use my work fairly”).

It’s now operational (“show me the receipts”) and financial (“don’t hollow out the funnel while you raise my bandwidth bill”).

Next week, we’ll talk about fixes that don’t require every publisher to hire an expensive legal team and that, more importantly, don’t rely on AI labs to suddenly find a conscience.

Come back for part 2, as I outline what a workable licensing + measurement system looks like, and the ways it can fail, so you don’t get sold a fantasy.

If you found this useful, you’ll enjoy last week’s issue, where I review what Steve Jobs and Daniel Ek taught us about the fight against piracy, from their lessons in the music industry.

That’s all folks!