The Great Extraction and the Landowner’s Pivot

Imagine a small, independent magazine covering a niche beat: say, maritime insurance fraud, which spans freight fraud, sanctions evasion, and offshore shell firms. Over the years, the publication, usually staffed by experts in the field, builds a library of long-form work: deep-dive investigations, document and evidence trails, interviews, corrections, and more.

Subscribers pay because the work saves them and their businesses from expensive mistakes and gives them insight into their industry they might not otherwise get.

Now imagine the magazine’s editor notices something odd: fewer reader emails, stalling subscriptions, and fragments of the magazine’s reporting resurfacing inside AI answers. All paraphrased, reassembled, floating free of the original publication.

No attribution, no permission, no payment.

It makes the old cliché “data is the new oil” strangely literal for journalism.

That odd feeling has a name: extraction. For two decades, independent journalists and small publishers were treated as suppliers to other people’s data oil fields: search, social, ad-tech. Generative AI pushes this extraction upstream. AI firms want the “high-fidelity” truth buried in your archives: verified reporting, contextual insight, structured data.

You get no referral traffic. No new subscribers. No ad revenue. Just extraction.

The mistake is thinking this is a scraping problem. It’s a “drilling-rights” problem.

The “Data Cliff” and the Scarcity of Truth

The first boom came from easy oil: scrape the open web. But that frontier has limits. Epoch AI estimates that, if current trends continue, leading language models could fully use the stock of high-quality, publicly available human text between 2026 and 2032. PBS summarised the same finding bluntly: the “gold rush” for human-written training text has a horizon.

When the easy stuff runs down, the industry reaches for substitutes: data fracking, of sorts.

In AI, that means more model-generated text. Research published in Nature showed that recursively training on generated data can cause “model collapse” over time: outputs drift as the underlying data distribution shifts, producing more errors and inaccuracies.

After the open web got ransacked, AI firms turned toward established reservoirs: publisher archives, paywalled libraries, specialist reporting. High-integrity long-form work carries what models struggle to manufacture: provenance, editorial judgment, a record of correction.

Scarcity matters because AI systems age poorly. When training loops start feeding on synthetic output, quality and diversity can degrade over generations. Researchers at Stanford and Rice universities call one version of this “Model Autophagy Disorder (MAD)”, a self-consuming loop where models get progressively worse without enough fresh real data.

Plainly, sloppy inputs produce sloppy systems. Garbage in, garbage out, as the old software adage goes. High-integrity reporting becomes premium feedstock because it anchors models to reality instead of to recycled, recursive slop.

Coverage gaps create an odd new advantage for smaller publishers: when everyone else’s models keep pumping out slop, narrow, high-density archives become strategically valuable.

The Refinery: Turning Raw Text into “Sovereign Intelligence”

Oil doesn’t leave the ground as jet fuel. It gets refined and metered. Journalism has a similar problem: raw text travels well; context falls off the lorry.

This matters for long-form investigations precisely because context is the work. A serious investigation is a chain of custody: who said what, when, on what evidence, with what uncertainty, and what changed later. Strip that away and your reporting becomes generic “information” that can be repackaged with zero accountability.

The Reuters Institute’s “News Atom” project proposes a “metadata blueprint” designed for journalism in the AI era, with a machine-readable schema that can encode structured signals about verification, status, and fact-check dates.

In practice, that means keeping each claim tied to its sourcing, timestamps, and corrections as your work moves through automated systems.

Refining your archive means preserving that chain in forms AI systems can’t easily ignore, manipulate, or duplicate: structured metadata that says what is verified, what is alleged, what changed, and where claims originated. It becomes harder to launder a paragraph when the paragraph carries a provenance trail.
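To make that concrete, here is a minimal sketch of what a claim-level provenance record might look like, written in Python. It is not the News Atom schema: the `ClaimRecord` and `Correction` classes, their field names, and the allowed status values are all hypothetical, chosen only to show how verification status, sourcing, fact-check dates, and corrections can travel together with a paragraph.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date
from typing import Optional

# Hypothetical status vocabulary; a real schema would define its own
ALLOWED_STATUSES = {"verified", "alleged", "disputed"}


@dataclass
class Correction:
    corrected_on: date
    note: str


@dataclass
class ClaimRecord:
    claim_id: str
    text: str
    status: str                      # one of ALLOWED_STATUSES
    sources: list[str]               # where the claim originated (documents, interviews)
    published_on: date
    fact_checked_on: Optional[date] = None
    corrections: list[Correction] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject records whose status the (hypothetical) schema does not recognise
        if self.status not in ALLOWED_STATUSES:
            raise ValueError(f"unknown status: {self.status!r}")

    def to_json(self) -> str:
        # asdict() recurses into nested Correction objects; default=str renders dates as ISO strings
        return json.dumps(asdict(self), default=str, indent=2)


# Illustrative record; identifiers and sources are made up
record = ClaimRecord(
    claim_id="inv-017-c3",
    text="The vessel changed its flag registry twice in 2023.",
    status="verified",
    sources=["registry-filing-2023-04", "interview-port-agent-2023-09"],
    published_on=date(2024, 3, 1),
    fact_checked_on=date(2024, 2, 27),
    corrections=[Correction(date(2024, 3, 5), "Date of second re-flagging corrected.")],
)
print(record.to_json())
```

The point of the design is that the claim never travels alone: anyone ingesting the text also receives its status, origin, and correction history in a machine-readable form.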

Cultural Capital: Informational Warfare and the “Style Moat”

Facts are one asset class. Voice is another; so are tone, taste, and cultural literacy.

In an environment saturated with propaganda and synthetic persuasion, independent magazines don’t merely report events; they shape the vocabulary people use to interpret them. That influence is a form of cultural capital that AI models absorb when they learn how to “sound human.”

This is part of why archives are being courted. “Style data” widens a model’s expressive range and helps it imitate the social cues readers associate with authority and belonging. The lever for publishers is the same as with facts: treat style as an asset with terms, rather than a “vibe” that gets downplayed in negotiations with AI firms and siphoned off.

TIME made the market logic explicit. It announced a multi-year partnership with OpenAI in June 2024. Reuters reported that the deal gives OpenAI access to TIME’s archive and that responses would cite and link back to TIME.com; financial terms were not disclosed.

If AI firms are reportedly willing to pay Reddit $60-70 million a year for its data, it’s a fair bet the TIME deal is lucrative too.

The Legal Discovery Waterfall: Evidence of Market Substitution

Commercial harm shows up when attribution fails and readers stop clicking.

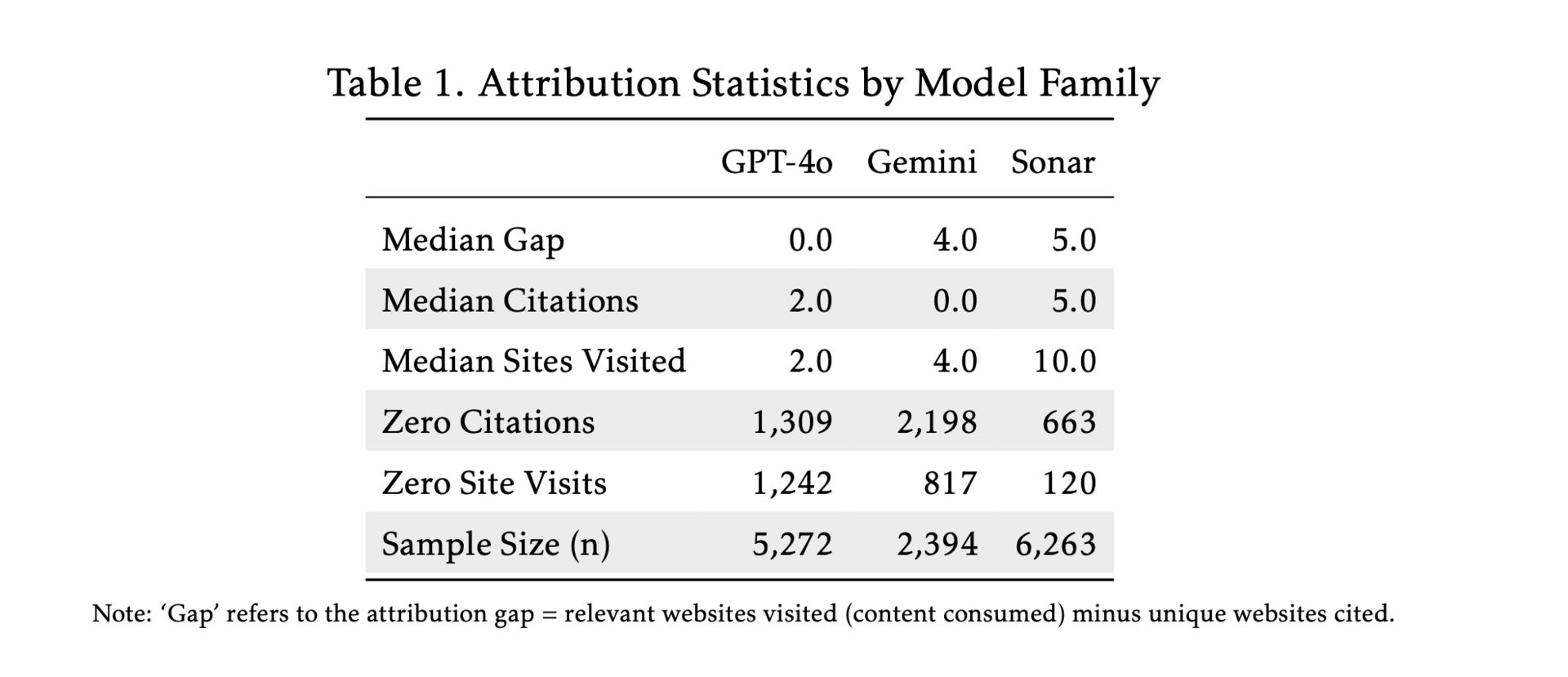

A June 2025 working paper from the AI Disclosures Project / SSRC analysed roughly 14,000 real-world conversation logs from search-enabled LLM systems and measured an “attribution gap”: relevant pages visited versus pages cited. We covered this in a previous edition.

In short, the authors found that Gemini provided no clickable citation in 92% of answers, and estimated that Gemini and Perplexity’s Sonar leave about three relevant sites uncited per typical query. They argue that citation behaviour largely reflects design choices, not technical limits.
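The gap itself is simple arithmetic. The sketch below is a simplified reading of the metric, not the paper’s exact methodology: for each logged answer it counts relevant pages the system visited but did not cite, then reports the average gap and the share of answers with no citation at all. The `LoggedAnswer` structure and the three toy logs are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class LoggedAnswer:
    visited: set[str]  # relevant pages the system retrieved while answering
    cited: set[str]    # pages it actually cited in the answer


def attribution_gap(answer: LoggedAnswer) -> int:
    # Relevant pages that were used but not credited
    return len(answer.visited - answer.cited)


def summarise(answers: list[LoggedAnswer]) -> tuple[float, float]:
    """Return (mean uncited pages per answer, share of answers with no citation)."""
    if not answers:
        raise ValueError("need at least one logged answer")
    mean_gap = sum(attribution_gap(a) for a in answers) / len(answers)
    no_citation_share = sum(1 for a in answers if not a.cited) / len(answers)
    return mean_gap, no_citation_share


# Toy logs, invented for illustration only
logs = [
    LoggedAnswer(visited={"a.example", "b.example", "c.example"}, cited=set()),
    LoggedAnswer(visited={"a.example", "d.example"}, cited={"a.example"}),
    LoggedAnswer(visited={"e.example", "f.example", "g.example", "h.example"}, cited={"e.example"}),
]

mean_gap, no_citation_share = summarise(logs)
print(f"mean gap: {mean_gap:.2f} pages; answers with no citation: {no_citation_share:.0%}")
```

With these toy logs the gaps are 3, 1, and 3 pages, so the mean is about 2.33 uncited pages per answer, and one answer in three carries no citation at all.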

Discovery can turn suspicion into a paper trail. Bloomberg Law reported that on January 5, 2026, US District Judge Sidney H. Stein affirmed an order requiring OpenAI to produce 20 million anonymised ChatGPT logs in the consolidated copyright litigation, rejecting OpenAI’s privacy objections. An analysis by the law firm Jones Walker summarising the ruling likewise describes Stein affirming a magistrate judge’s order for the full 20-million-log sample.

This is the kind of evidentiary channel that could show, at scale, whether users are asking AI systems for “the latest exclusive” instead of reading it at the source. That would be fine, but only if OpenAI and others pay to access and use the work.

The Attribution Gap.

Source: The AI Disclosures Project

The Collective Leverage Model: Why the Soloist Struggles

A freelancer armed with little more than screenshots and spreadsheets cannot bargain with an industrial extractor. Obviously. Transaction costs kill you first. Even in the unlikely event you can afford a top legal team, lengthy delays do the rest of the damage.

Leverage starts with visibility: where your work appears inside AI answers and across the wider web, how often it appears, and how close the match is. Then comes coordination: shared evidence standards, shared bargaining, shared enforcement, repeatable licensing terms.

Monitoring → notice/enforcement → licensing.
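As for “how close the match is”, one common, simple approach is to compare overlapping word sequences (shingles) and score the overlap with Jaccard similarity. This is a hedged sketch, not a description of how any particular monitoring service works; the function names and the three-word shingle size are assumptions, and heavy paraphrase would need stronger techniques, such as comparing text embeddings.

```python
import re


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of n-word sequences in `text`, lowercased, punctuation dropped."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    # Texts shorter than n words yield an empty set
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def match_score(original: str, candidate: str, n: int = 3) -> float:
    """Jaccard similarity of the two shingle sets: 1.0 = identical wording, 0.0 = nothing shared."""
    a, b = shingles(original, n), shingles(candidate, n)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


# Made-up sentences for illustration
original = "The tanker switched flags twice before the cargo was insured in Cyprus."
suspect = "The tanker switched flags twice before the cargo was insured, according to reports."
unrelated = "Quarterly earnings rose at the bank."

print(round(match_score(original, suspect), 2))
print(match_score(original, unrelated))
```

Here the suspect sentence shares 8 of 13 distinct three-word sequences with the original, a score of about 0.62, while the unrelated sentence scores 0.0. Scores like these are what let a monitoring report say not just “it appeared” but “how closely”.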

Writers’ Bloc is one option. We exist to fill that gap. We help you identify your work reliably, prove authorship mathematically in a way that stands up to scrutiny, see where your work turns up without permission, and keep licensing and payment manageable without turning your newsroom into a tech outfit. To be clear, we do not offer takedowns or legal advice, and we do not guarantee 100% detection, removal, or payment compliance.

We give you instant visibility of where, and how often, your work shows up in places it shouldn’t, and we make licensing and payments easy. It all runs in the background, so it stays simple to understand and manage.

For video-heavy libraries, Infactory AI is another route.

To join a licensing collective, look at Creative Licensing International.

Conclusion: Becoming the Baron

If you are angry and frustrated, that’s warranted. This extraction didn’t happen by accident. It happened because everyone treated archives as free raw material.

AI companies have already taken the easy crude. Now they need to frack the reserves that still deliver profits: verified archives with provenance, context, and editorial rigour. Your work.

Treat your archive as a governed asset, the way oil states treat a field: map it and meter it. Attach provenance and verification context. Measure misuse. Coordinate. Set terms for drilling rights. Alone if you must, collectively if you can.

If you know a publisher or freelancer doing serious work and sitting on years of reporting but still giving away drilling rights for free, send them this.

P.S. Many of our growing group of weekly readers are not yet subscribed, so please subscribe if you haven’t already.

We don’t charge a fee to read our newsletter; instead, we simply ask that you subscribe (and share it if you think it’s worth it). This is what allows us to keep bringing you the best insights from across the industry!